
Norma walnut benchmark email

1/5/2024

The benchmark and code for baselines are available at \url. Join the thousands of athletes who have used the COPA Score to benchmark their technical, physical, and cognitive skills under standardized conditions.

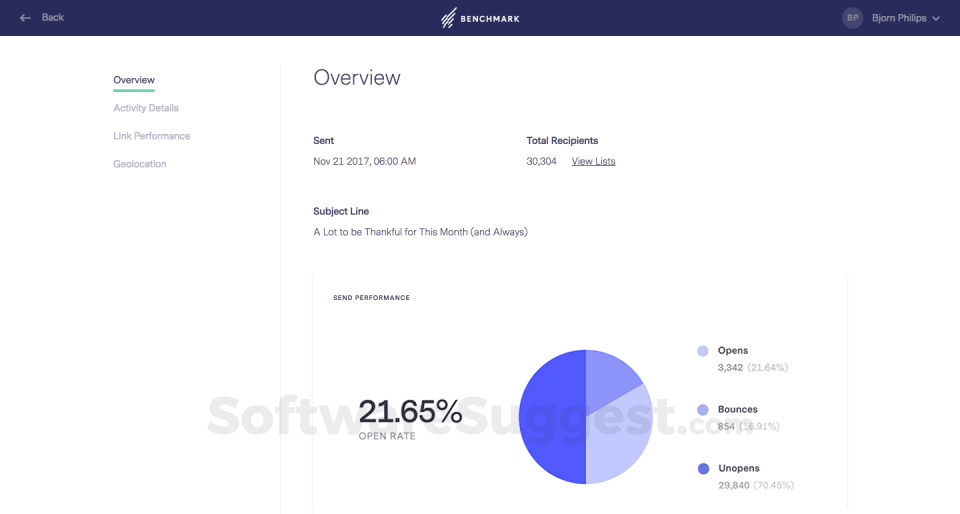

Benchmark Email is a simple and powerful email marketing tool for anyone who needs to send personalized email at scale. Try out our email marketing software for free to see how easy it is to create beautiful, personalized emails, landing pages, and automations. Send your next email in less than 15 minutes.

Norma Jackson, and with the help of the LF Foundation Principal as Leader. Get Norma Walberg's email address () and phone number (860344.) at RocketReach.

Existing works studying weak supervision for NLU mostly either focus on a specific task or simulate weak supervision signals from ground-truth labels. It is thus hard to compare different approaches and evaluate the benefit of weak supervision without access to a unified and systematic benchmark with diverse tasks and real-world weak labeling rules. In this paper, we propose such a benchmark, named WALNUT (semi-WeAkly supervised Learning for Natural language Understanding Testbed), to advocate and facilitate research on weak supervision for NLU. WALNUT consists of NLU tasks of different types, including document-level and token-level prediction tasks. WALNUT is the first semi-weakly supervised learning benchmark for NLU, where each task contains weak labels generated by multiple real-world weak sources, together with a small set of clean labels. We conduct baseline evaluations on WALNUT to systematically evaluate the effectiveness of various weak supervision methods and model architectures. Our results demonstrate the benefit of weak supervision for low-resource NLU tasks and highlight interesting patterns across tasks. We expect WALNUT to stimulate further research on methodologies to leverage weak supervision more effectively.
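To make the setting concrete (several weak sources voting on each example, plus a small clean set to check against), here is a minimal Python sketch. Majority voting is only one common way to combine weak labels; the post does not say which methods the WALNUT baselines use. The names `ABSTAIN`, `majority_vote`, and `accuracy_on_clean`, and the toy data, are hypothetical illustrations and not part of the benchmark's code.

```python
from collections import Counter
from typing import List, Optional, Sequence

ABSTAIN = -1  # assumed convention: a weak source that gives no label


def majority_vote(votes: Sequence[int]) -> Optional[int]:
    """Combine one example's weak labels; None if all abstain or the top two tie."""
    counts = Counter(v for v in votes if v != ABSTAIN)
    if not counts:
        return None
    ranked = counts.most_common(2)
    if len(ranked) == 2 and ranked[0][1] == ranked[1][1]:
        return None  # no clear winner among the weak sources
    return ranked[0][0]


def accuracy_on_clean(weak_votes: List[Sequence[int]], clean_labels: Sequence[int]) -> float:
    """Share of clean-labeled examples whose aggregated weak label matches.

    Examples where the vote is unresolved (None) count as incorrect.
    """
    aggregated = [majority_vote(v) for v in weak_votes]
    correct = sum(1 for a, y in zip(aggregated, clean_labels) if a == y)
    return correct / len(clean_labels)


# Toy data: four examples, three weak sources each (all values made up).
weak_votes = [[1, 1, 0], [0, ABSTAIN, 0], [1, 0, ABSTAIN], [ABSTAIN, ABSTAIN, ABSTAIN]]
clean_labels = [1, 0, 1, 0]
print(accuracy_on_clean(weak_votes, clean_labels))
```

In this toy run the last two examples stay unresolved (one tie, one where every source abstains), which is the kind of gap a small clean set can help expose.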

Weak supervision has proven valuable when large amounts of labeled data are unavailable or expensive to obtain. From the abstract of the paper titled WALNUT: A Benchmark on Semi-weakly Supervised Learning for Natural Language Understanding, by Guoqing Zheng and 3 other authors: Building machine learning models for natural language understanding (NLU) tasks relies heavily on labeled data.
